import matplotlib.pyplot as plt

import numpy as np

import scipy.stats as st

from scipy.optimize import minimize

rng = np.random.default_rng(seed=1)Task 0¶

Play around with the jupyter notebook from the lecture and see if you can get better accuracy results.

Solution: My best accuracy was 0.76 with bp, s3 and s5

Task 1: Least-Squares Method Analytically¶

Given are 10 value pairs for from 1 to 10, where the are Poisson-distributed measurements around expectation values .

The parameter is to be estimated using the weighted least-squares method. To do this, consider the following functions:

1.1¶

Analytically calculate the estimators as a function of which minimize the corresponding functions. Are there special cases where the estimators are not defined?

1.2¶

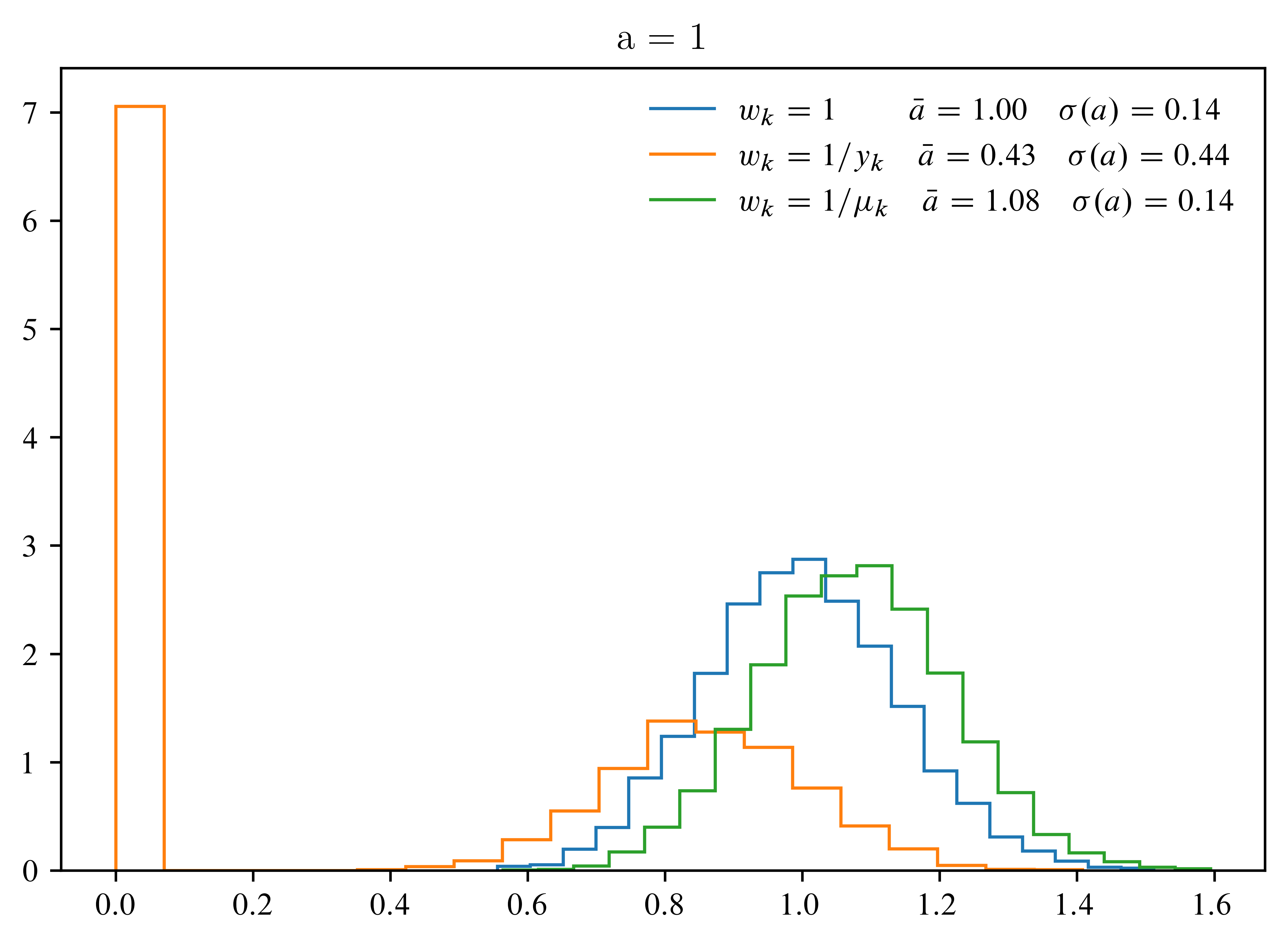

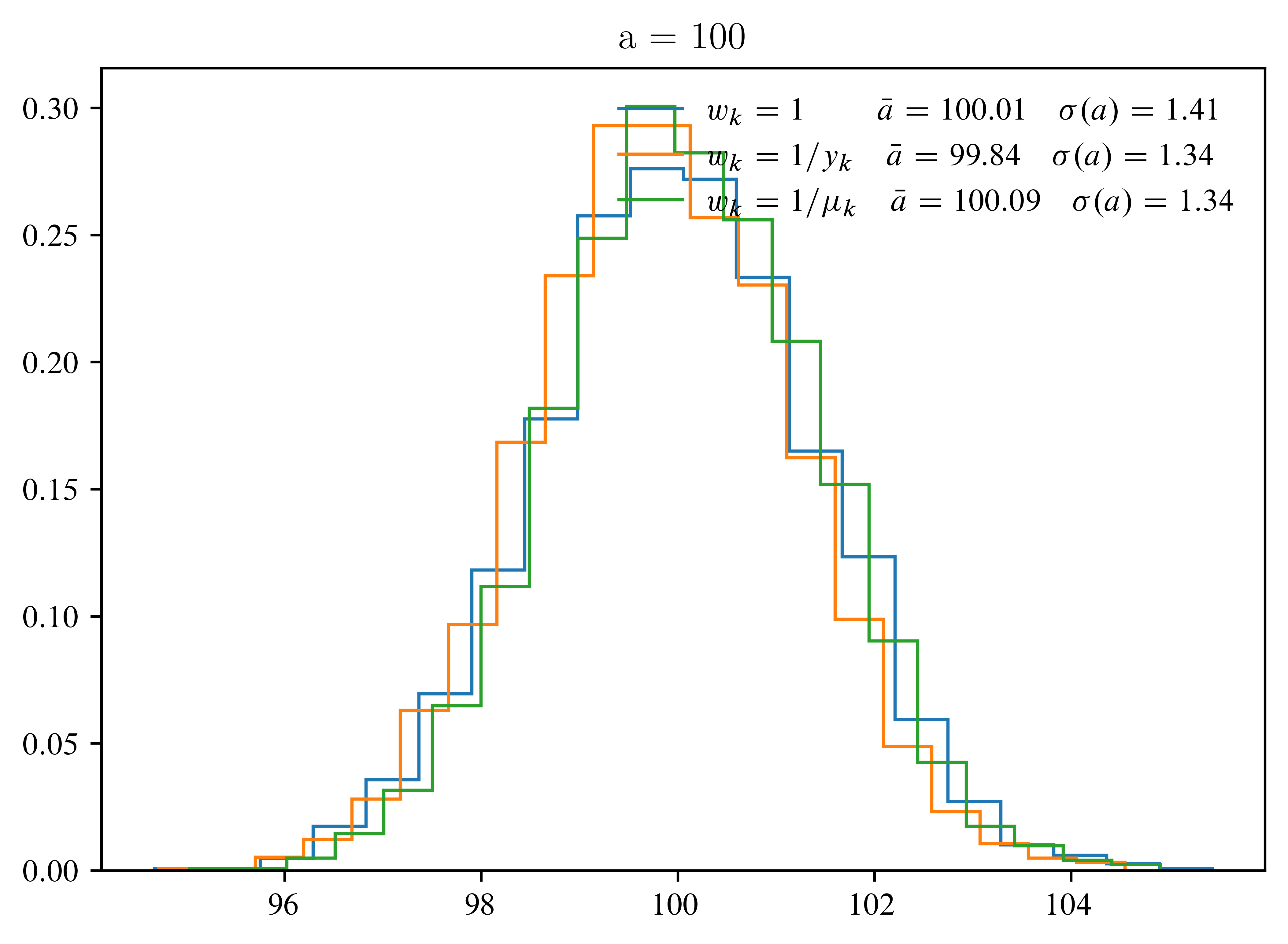

Compute the distributions of the estimators using Monte-Carlo simulation for and . Which estimator has the smallest bias and variance?

def generate(a, n=10, size=5000):

k = np.arange(1, n + 1) # Indices of the y_k, so [1,2,...,10]

y = rng.poisson(

a * k, size=(size, n)

) # draw size times n (=len(ks)) poisson numbers with mean a*k

return k, y

estimators = {

# lambda: anonymous functions

"1\\;\\quad": lambda k, y: np.sum(y * k) / np.sum(k**2),

# this can go wrong for small a (as y=0)

"1/y_k": lambda k, y: np.sum(k) / np.sum(k**2 / y),

# this will go wrong for a = 0

"1/\\mu_k": lambda k, y: np.sqrt(np.sum(y**2 / k) / np.sum(k)),

}

for a in (1, 100):

k, y = generate(a)

plt.figure(figsize=(5.5, 4), layout="constrained")

for label, estim in estimators.items():

with np.errstate(divide="ignore"):

ab = [estim(k, yb) for yb in y]

ma = np.mean(ab)

sa = np.std(ab)

w, xe = np.histogram(ab, bins=20)

w = w / np.sum(w) / np.diff(xe)

plt.stairs(

w,

xe,

label=f"$w_k = {label} \\quad \\bar{{a}} = {ma:3.2f} \\quad \\sigma(a) = {sa:.2f}$",

)

plt.title(f"a = {a}")

plt.legend(frameon=False, loc="upper right")